BLUF: For hardware running at 26 GBaud (e.g. 400G-DR8), it's likely that existing COTS parts could be reassembled to tap a network. COTS parts that advertise 53 GBaud compatibility (e.g. for 400G-DR4 or 800G-DR8) do not exist, and we are uncertain how difficult it is to tap these links. In the worst-case scenario, we need new hardware that has not been designed yet.

In either case, R&D is required to create a reliable line-speed setup, but for the 53 GBaud and 106 GBaud cases, we may need as much as a new ASIC tape-out.

Background

Data signals inside servers are electrical. These are often converted into optical signals for transmission over longer distances, e.g. between servers. This represents the vast majority of signals that we are interested in tapping.

Information is sent through an optical fiber by modulating the power output of a laser. The difference in laser power between the peaks and troughs of the modulation scheme encodes the data. We refer to a single fiber carrying data in this way as a “lane”.

The most common modulation scheme (PAM4) uses four modulation levels, i.e. two bits per symbol. At a symbol rate of 53 GBaud, this gives a lane bandwidth of 106 Gbit/s. These are generally referred to as 100G lanes.
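As a quick check of these numbers, the sketch below multiplies the symbol rate by the bits per symbol (log2 of the number of levels). It uses the rounded figures from this text; real 100G lanes run at 53.125 GBaud, with the capacity above 100 Gbit/s taken up by encoding and FEC overhead. The function name is our own, for illustration only.

```python
import math

def lane_bit_rate_gbps(symbol_rate_gbaud: float, levels: int) -> float:
    """Raw line rate of one lane: symbols per second times bits per symbol."""
    bits_per_symbol = math.log2(levels)  # PAM4 -> 2 bits, PAM2 (NRZ) -> 1 bit
    return symbol_rate_gbaud * bits_per_symbol

print(lane_bit_rate_gbps(53, 4))   # 106.0  (a "100G" PAM4 lane)
print(lane_bit_rate_gbps(106, 4))  # 212.0  (a "200G" PAM4 lane)
print(lane_bit_rate_gbps(26, 4))   # 52.0   (a "50G" PAM4 lane)
```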

Faster speeds are achieved by increasing the number of modulation levels or the symbol rate. Network links are often made up of multiple lanes, e.g. a 400G link could be made up of 4 parallel 100G lanes (in each direction).

The type and number of lanes is specified in a Physical Medium Dependent (PMD) specification. An example would be 800G-DR8:

- 800G = total link bandwidth

- D = 500m reach class (as opposed to S=short, F=far, L=long, E=extended)

- R = 64B/66B-based PCS encoding (the PMDs discussed here all use PAM4 modulation, with 4 modulation levels)

- 8 = 8 optical lanes

8 optical lanes for 800G means 100G per lane, and a 100G lane using PAM4 must operate at 53 GBaud.
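The same decoding can be written as a small helper. This is a minimal sketch, assuming PMD names follow the `<rate>G-<reach>R<lanes>` pattern above, every lane is PAM4, and each lane carries the 6.25% line-rate overhead of these Ethernet PMDs (a 100G lane is signalled at 106.25 Gbit/s, i.e. 53.125 GBaud). `parse_pmd` is a hypothetical helper, not part of any library.

```python
import re

def parse_pmd(name: str) -> dict:
    """Split a PMD name like '800G-DR8' into its parts and derive per-lane figures."""
    m = re.fullmatch(r"(\d+)G-([SDFLE])R(\d+)", name)
    if m is None:
        raise ValueError(f"unrecognised PMD name: {name!r}")
    total_gbps, reach, lanes = int(m.group(1)), m.group(2), int(m.group(3))
    per_lane_gbps = total_gbps / lanes
    # Assumption: PAM4 (2 bits/symbol) plus a 6.25% line-rate overhead
    # (100G lane -> 106.25 Gbit/s on the wire -> 53.125 GBaud).
    symbol_rate_gbaud = per_lane_gbps * 1.0625 / 2
    return {"total_gbps": total_gbps, "reach": reach, "lanes": lanes,
            "per_lane_gbps": per_lane_gbps, "symbol_rate_gbaud": symbol_rate_gbaud}

for pmd in ["400G-DR8", "400G-DR4", "800G-DR8", "1600G-DR8"]:
    p = parse_pmd(pmd)
    print(f"{pmd}: {p['lanes']} x {p['per_lane_gbps']:g}G lanes at ~{p['symbol_rate_gbaud']:g} GBaud")
```

For the PMDs mentioned in this piece it gives 8 x 50G lanes at ~26.5625 GBaud (400G-DR8), 4 x 100G and 8 x 100G lanes at ~53.125 GBaud (400G-DR4, 800G-DR8), and 8 x 200G lanes at ~106.25 GBaud (1600G-DR8), matching the rounded 26/53/106 GBaud figures used throughout.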

800G-DR8 is by far the most common type of 800G link in datacenter networks. With the transition to 1600G links expected in 2026, PMDs like 1600G-DR8, operating at 106 GBaud, are likely to become the new standard.

We concentrated on:

- Passive optical taps: Optical links are modified to have a light splitter inline, and some light is diverted to the verifying device, which decodes it.

- Active optical taps: Optical signals are converted back to electrical signals, which are duplicated and sent to the original destination and to the verifying device.

Passive Optical Taps

A passive optical tap splits an optical network signal, dividing the light between a monitoring leg and the live leg.

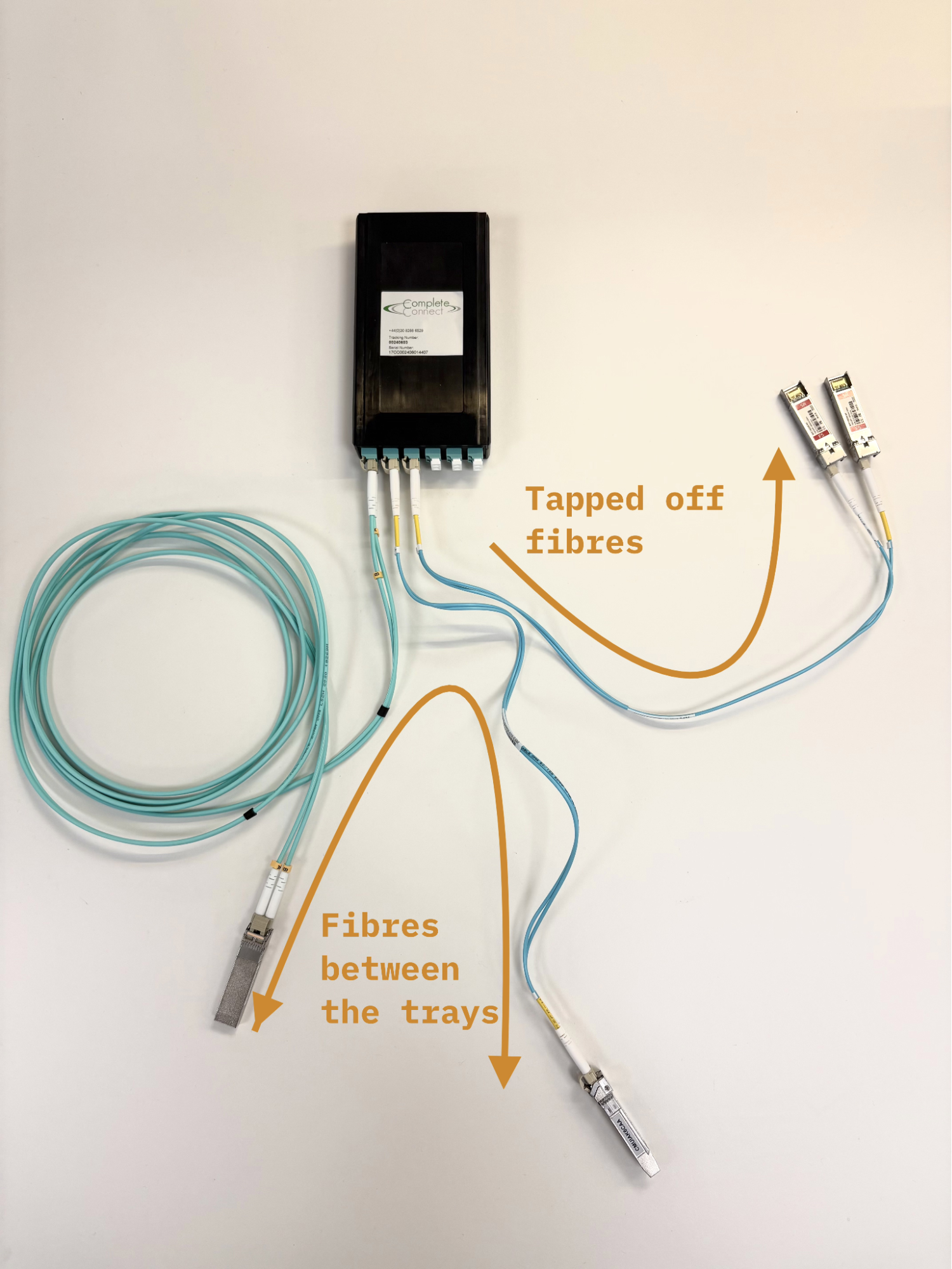

Example 10G network tap

At the receive end of the link, the light is “demodulated”, i.e. interpreted as a stream of bits. One measure of signal strength is the Optical Modulation Amplitude (OMA): the difference in optical power between the highest and lowest modulation levels. If the received OMA is too small, the different modulation levels are sometimes decoded incorrectly. The rate of incorrectly transferred bits is called the Bit Error Rate (BER).

The link budget is the allowable loss of optical power before the BER sharply increases.¹ The received optical power must be greater than the receiver's optical sensitivity for the link to function. The “link margin” represents how much room there is for additional losses in a link before the link breaks.

A passive tap splits the optical power between two destinations, the live and monitoring legs. We take these in turn to see what our requirements for each are.

Live leg

We think this is a realistic power calculation for a 53 GBaud fiber link (as used in e.g. 800G-DR8 or 400G-DR4 links). We use dBm for the absolute power of the light signal (a logarithmic unit), and dB for relative changes in that signal's strength.

| Example power calculation | Value |
|---|---|
| Transmitter OMA power | -0.8 dBm |
| Losses due to fiber interfaces | -1.4 dB (-0.35 dB for each of the four interfaces on a tapped fiber line) |
| Loss due to 500 m of fiber | -0.25 dB |
| Received OMA power | -2.45 dBm (-0.8 dBm - 1.4 dB - 0.25 dB) |
| Live leg receiver sensitivity | -3.9 dBm |
| Live leg link margin | 1.45 dB (-2.45 dBm - (-3.9 dBm)) |

Unfortunately, a positive link margin doesn't guarantee a functional link. Due to imperfections such as dust in the interconnects, manufacturing variance and laser degradation, the actual received OMA has a large variance. For these links, even a margin of 1.45 dB (which represents a 40% safety factor) is considered tight.
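The live-leg budget is plain dB arithmetic: subtract each loss from the transmit power, then compare the result with the receiver sensitivity. Converting the margin back to a linear power ratio gives the 40% figure. A minimal sketch using the table's numbers (the helper names are ours, for illustration):

```python
def received_power_dbm(tx_dbm: float, losses_db: list[float]) -> float:
    """Received OMA in dBm: transmitter power minus every loss (given as positive dB)."""
    return tx_dbm - sum(losses_db)

def margin_db(rx_dbm: float, sensitivity_dbm: float) -> float:
    """Link margin: how far the received power sits above the receiver sensitivity."""
    return rx_dbm - sensitivity_dbm

def db_to_ratio(db: float) -> float:
    """Convert a dB difference into a linear power ratio."""
    return 10 ** (db / 10)

interfaces_db = 4 * 0.35  # four interfaces on a tapped fiber line
fiber_db = 0.25           # 500 m of fiber
rx = received_power_dbm(-0.8, [interfaces_db, fiber_db])
m = margin_db(rx, -3.9)
print(f"received {rx:.2f} dBm, margin {m:.2f} dB, ~{db_to_ratio(m):.2f}x the sensitivity")
```

A 1.45 dB margin works out to roughly 1.4 times the power the receiver needs, which is where the 40% comes from.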

In reality, the power received at the destination transceiver has a broad probability distribution, with some individual links having a larger margin, and some smaller.

Different passive optical network taps have different split ratios (ratio of optical power that goes to the live versus monitoring leg). We convert these split ratios into “losses” that each leg sees, i.e. how much light is “lost” simply because it is being sent to the other leg.

| Split (live leg % / monitor leg %) | Live leg loss | Monitor leg loss |
|---|---|---|
| 50/50 | -3 dB | -3 dB |
| 80/20 | -1 dB | -7 dB |
| 90/10 | -0.5 dB | -10 dB |
| 95/5 | -0.2 dB | -13 dB |
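Each leg's loss is just 10·log10 of its share of the power. The sketch below assumes an ideal splitter; real taps add some excess insertion loss on top, which it ignores. The table's values are rounded (an ideal 95% leg costs about 0.22 dB).

```python
import math

def split_losses_db(live_fraction: float) -> tuple[float, float]:
    """Loss (dB) seen by the live and monitor legs of an ideal, lossless splitter."""
    live_db = 10 * math.log10(live_fraction)
    monitor_db = 10 * math.log10(1 - live_fraction)
    return live_db, monitor_db

for live in (0.50, 0.80, 0.90, 0.95):
    live_db, mon_db = split_losses_db(live)
    print(f"{live:.0%}/{1 - live:.0%}: live {live_db:.2f} dB, monitor {mon_db:.2f} dB")
```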

We have discussed this with a manufacturer of passive optical taps, who are confident that non-extreme split ratios (near 50:50) should work for 53 GBaud links, if care is taken to control dust, etc. However, optical taps that target 53 GBaud links are not currently available, let alone 106 GBaud, and they had not done any testing with these faster links.

Our calculations and research suggested that even a 1 dB loss may be unacceptable. We are significantly uncertain about this, and plan to test which view is correct.

In the rest of this analysis, we assume the worst-case scenario of needing the 95/5 split ratio.

Note on slower links: In 26 GBaud links (e.g. 400G-DR8), the typical optical link margin is large enough to support standard optical tapping with, e.g., a 70/30 split ratio. Such taps are already common COTS parts; we're setting up a Profitap 400G tap on our servers to demonstrate this.

Monitor leg

If we need a 95/5 split ratio to make the live leg work, then the monitor leg receives very little power. We cannot use the same type of transceiver as the live leg without an additional optical amplifier, as the signal is too small to be resolved.

The following table assumes -0.8 dBm transmit power and 1.65 dB of optical losses, in addition to the 10 dB or 13 dB due to the 90/10 or 95/5 split respectively.

| Approach | Receiver sensitivity | Link margin (90/10) | Link margin (95/5) |
|---|---|---|---|
| SOTA PIN receiver | -9.7 dBm | -2.75 dB (fail) | -5.75 dB (fail) |
| APD receiver | -15.1 dBm | 2.65 dB (pass) | -0.35 dB (a significant proportion of links fail) |
| BDFA amplifier | -33.9 dBm² | 21.45 dB (pass) | 18.45 dB (pass) |
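The monitor-leg margins can be reproduced the same way. This sketch hard-codes the figures quoted above. The -33.9 dBm BDFA entry is consistent with the -3.9 dBm receiver sitting behind roughly 30 dB of amplifier gain; as footnote 2 notes, amplifier noise would likely prevent reaching it in practice.

```python
TX_DBM = -0.8         # transmitter OMA
FIXED_LOSS_DB = 1.65  # interfaces + 500 m fiber, as in the live-leg table
SPLIT_LOSS_DB = {"90/10": 10.0, "95/5": 13.0}  # monitor-leg share of the split
SENSITIVITY_DBM = {
    "SOTA PIN receiver": -9.7,
    "APD receiver": -15.1,
    "BDFA amplifier": -33.9,  # -3.9 dBm receiver behind ~30 dB of BDFA gain (see footnote 2)
}

def monitor_margin_db(sensitivity_dbm: float, split_loss_db: float) -> float:
    """Monitor-leg margin: received power after all losses, minus receiver sensitivity."""
    received_dbm = TX_DBM - FIXED_LOSS_DB - split_loss_db
    return received_dbm - sensitivity_dbm

for receiver, sens in SENSITIVITY_DBM.items():
    margins = {split: round(monitor_margin_db(sens, loss), 2)
               for split, loss in SPLIT_LOSS_DB.items()}
    print(receiver, margins)
```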

To make up for this, we need to install either amplifiers or more sensitive receivers.

- More sensitive receivers can increase the available link margin: state-of-the-art PIN receivers (-9.7 dBm sensitivity) and APD receivers (-15.1 dBm sensitivity).

- Amplifiers: Bismuth-Doped Fiber Amplifiers (BDFAs) can provide around 30 dB of gain, which makes them useful for recovering a weak signal.

Note that installing more sensitive receivers is not necessarily a long-term solution to optical tapping. If reliable, more sensitive receivers become available, data centers are likely to adopt them to increase their lane bandwidth, and we are back to square one, needing a new generation of even more sensitive receivers on the monitor leg.

However, where they are available and reliable, we could install more sensitive receivers on the monitor leg in legacy data centers.

How to build this

To make the passive taps described, we need:

- 2x extra fiber patch cables (one for each leg). This is easy.

- Monitor server. We'll need a lot of NICs and servers to capture all of this data; at most, we need a 1:1 matching of data center NICs to verifier monitor NICs.

- Amplifier/sensitive receiver setup for the monitor leg. Not commercially available. These are not impossible to develop, but the research and development would need to happen if we deem these parts necessary.

- 95/5 optical tap. No optical taps currently claim to handle the latest standards (e.g. 1600G-DR8). However, given that the physical medium is the same as for slower links, we expect the COTS solutions to be capable already.

Having spoken to optical tap manufacturers, we are now hopeful that 53 GBaud may be achievable with a 50:50 split. They believe that if you carefully manage the dust, then these speeds won't be a problem. We're yet to test this and will publish updates as we do.

Active Optical Taps (Optical-Electrical-Optical)

Rather than simply dividing the optical power between the live and monitor leg, we can instead make a tap that converts the optical signal into an electrical signal, divides it in the electrical domain, and then converts it back to an optical signal.

Given that we are demodulating and then remodulating our signal, we do not have the same optical power concerns as in the passive optical tap case.

The core parts to make this are standard pluggable optical-electrical transceivers plus retimers configured in 1:2 fan-out.

Designing these boards isn’t a trivial engineering task, but it is a known one.

Advantage: No signal degradation.

Disadvantages: added latency (~20 ns), tens of watts per tap, and active electronics that add an attack surface, reducing trust for both the prover and the verifier.

Retimer ASICs exist for 53 GBaud lanes (from several suppliers), and we believe Broadcom manufactures one for 106 GBaud links. If this is not the case, a specialized ASIC manufacturer (e.g. Broadcom) would need to do the ASIC design and tape-out; however, we are confident that this is feasible in terms of design complexity.

If we want this option on the table, then OEO taps need to be designed and tested as soon as possible. We have a more detailed technical scoping that covers the things we’d need to do to deliver prototype active OEO taps for 26 GBaud; this may be available on request.

Component failure

This type of tap introduces extra transceivers and the fan-out retimer into the live data path. This introduces extra failure points, which would reduce the reliability of the links that are being tapped - if one of these components fails, the live link goes down.

We expect the main source of unreliability to be the newly introduced transceivers: we are doubling the number of transceivers in the hot path, and hence roughly doubling the transceiver-related failure rate. This could be significant.
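To see why doubling the transceivers roughly doubles the failure rate, treat the live path as a series chain in which any single failure takes the link down. This is a simplified model: it assumes independent failures, ignores the retimer itself, and uses a made-up 1% annual failure probability per transceiver purely for illustration.

```python
def link_failure_probability(n_components: int, p_component: float) -> float:
    """Probability that a series chain fails, if any one failure takes the link down.

    Assumes component failures are independent.
    """
    return 1 - (1 - p_component) ** n_components

# Hypothetical per-transceiver annual failure probability, for illustration only.
P_TRANSCEIVER = 0.01

untapped = link_failure_probability(2, P_TRANSCEIVER)    # one transceiver at each end
oeo_tapped = link_failure_probability(4, P_TRANSCEIVER)  # plus two inside the OEO tap
print(f"untapped {untapped:.4f}, OEO-tapped {oeo_tapped:.4f}, ratio {oeo_tapped / untapped:.2f}")
```

For small per-component failure probabilities, 1 - (1 - p)^n is close to n·p, so going from two to four transceivers comes out at just under 2x.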

How to build this

There are easily obtainable retimer parts at 26 GBaud (e.g. the TI DS560DF810). At 53 GBaud and 106 GBaud, there are several parts from multiple manufacturers, but chip access is restricted by very large minimum order quantities (TI confirmed they don't have 106 GBaud on their roadmap). This will be a difficult high-speed electronic design problem, but it is one that switch, motherboard, and NIC makers have all solved before.

- Transceivers x4. This is easy.

- 2x extra fiber patch cables (one for each leg). This is easy.

- Monitor server. As before.

- Active optical-electrical-optical tap (aka retimer board). Not commercially available and needs to be developed by a large electronics company.

Alternative Tap Concepts

Electrical tap (aka OSFP/QSFP interposer)

Using similar technology to the OEO tap, we can tap a point in the network that is already electrical rather than tapping the fiber. For example, we could make an interposer that plugs into the transceiver's electrical interface (the OSFP or QSFP-DD port), electrically tapping the signal between the port and the transceiver.

As the transition to co-packaged optics (CPO) happens, this option may not be feasible for all link terminations. For at least the next few years, we expect CPO to be adopted at the switch ends of links rather than the node/GPU ends, which would keep this option open. Compared to an OEO tap, electrical taps:

- Add half the number of transceivers

- Have tighter space constraints, as they sit closer to the GPU. These include the spacing between ports, room for heat dissipation, and mechanical load on the port.

- Might have some tamper resistance built in, as they occupy the network ports (and hence could detect replugging/reconfiguration)

Use existing components

NICs and switches both see all the network packets passing through. Modern switches have built-in capacity to copy traffic to a monitoring port (port mirroring). Unfortunately, switches won’t handle complete copying at 800G or even 400G; they’ll drop or sample packets, making an incomplete record. Additionally, the switch is normally controlled by the prover, rather than the verifier, resulting in a trust issue.

If we just want to sample packets, rather than get a complete log, then it could be worth understanding the limits of switch port mirroring.

Lower the network bandwidth

If we cannot tap fast lanes, then can we mandate that the network runs at a tappable speed instead? To run the network slower there are three levers:

- Lower the baud rate

- Change the encoding (e.g. PAM4 down to PAM2)

- Use fewer lanes

We only get more link margin from the first two levers. It may be that transceiver manufacturers can change the baud rate with a firmware update, but it's also possible that the chip design prevents this.
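All three levers scale the raw link rate linearly, as the sketch below shows for an 800G-DR8-like link (8 lanes, 53.125 GBaud, PAM4). Each option halves the bandwidth, but only the first two change what each receiver has to resolve per lane; dropping lanes leaves every remaining lane exactly as hard to receive. The configuration values are illustrative assumptions.

```python
import math

def link_rate_gbps(lanes: int, symbol_rate_gbaud: float, levels: int) -> float:
    """Raw link rate: lanes x symbol rate x bits per symbol."""
    return lanes * symbol_rate_gbaud * math.log2(levels)

baseline = dict(lanes=8, symbol_rate_gbaud=53.125, levels=4)  # 800G-DR8-like link
options = {
    "baseline": baseline,
    "lower the baud rate": {**baseline, "symbol_rate_gbaud": 26.5625},
    "PAM4 -> PAM2": {**baseline, "levels": 2},
    "fewer lanes": {**baseline, "lanes": 4},
}
for name, cfg in options.items():
    print(f"{name}: {link_rate_gbps(**cfg):g} Gbit/s raw")
```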

Additionally, if the network runs slowly enough, approaches like port mirroring in the switch may become viable.

MEMS mirrors

Google uses optical switches built from MEMS mirrors, which steer the light carrying the packets. A similar system could be used to sample network packets, taking, say, one in every 1,000 packets.

This could be done with Optical Circuit Switching as implemented in Google's TPU Pod architecture, where mirrors act as switches that can redirect all of the traffic on a fiber. However, the switching speed is relatively slow (1-10s of ms), nowhere near fast enough to sample a single packet; instead, we would capture contiguous bursts (on the order of sampling 1,000,000 packets in every 1,000,000,000).
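To get a feel for why millisecond switching rules out picking single packets, the sketch below counts how many packets cross a link while a mirror holds one position. The 800 Gbit/s link and 4096-byte packet size are illustrative assumptions, and the helper is hypothetical.

```python
def packets_per_window(link_gbps: float, window_s: float, packet_bytes: int) -> float:
    """Number of packets that cross a link while a mirror holds one position."""
    bits = link_gbps * 1e9 * window_s
    return bits / (8 * packet_bytes)

# Hypothetical 4096-byte packets on an 800G link, for 1 ms and 10 ms switching times.
for window_ms in (1, 10):
    n = packets_per_window(800, window_ms / 1000, 4096)
    print(f"{window_ms} ms window: ~{n:,.0f} packets")
```

Even at the fast end, each mirror position spans tens of thousands of packets, so any sample is a burst rather than an individual packet.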

This would be a whole R&D project, but Google’s work is an existence proof of the tech.

Conclusions

Network taps for cutting-edge datacenters are physically possible, but we are unsure if they can be installed tomorrow. If we expect network taps to be needed as part of a verification program, then R&D needs to start now to have all the pieces in place.

Acknowledgements

Thank you to Naci Cankaya for sharing early versions of his related work and comments on our writeup.

Footnotes

1. This happens because the raw error rate exceeds what the Forward Error Correction (FEC) algorithm can correct. The sensitivity is defined as the optical power at which the pre-FEC bit error rate is 24 bit errors per 100k bits (2.4 × 10⁻⁴).

2. This is the sensitivity taking into account the amplification of the BDFA. Note that this sensitivity likely would not be achievable due to the noise added by the BDFA.