Replacing NICs with DPUs puts a trusted OS at the network boundary, able to enforce security policies. We used a DPU as a tray-level bandwidth limiter, an extra layer of security for protecting model weights. In building this proof of concept, we showed that DPUs can encrypt at near-line rate with multi-peer tunnels. This also enables new security features in production environments, such as tapping the network and filtering illegitimate data flows.

Why

AI model weights are valuable in both a financial and a national security sense, and we expect this only to become more important. One useful property is that model weights are very large (>1Tb). That size works in our favour: we can restrict bandwidth to slow down the exfiltration of a model. Last year, Anthropic moved to ASL-3 and imposed egress bandwidth limits at the level of the whole data centre:

One control in particular, however, is more unique to the goal of protecting model weights: we have implemented preliminary egress bandwidth controls. Egress bandwidth controls restrict the flow of data out of secure computing environments where AI model weights reside. The combined weights of a model are substantial in size. By limiting the rate of outbound network traffic, these controls can leverage model weight size to create a security advantage. When potential exfiltration of model weights is detected through unusual bandwidth usage, security systems can block the suspicious traffic. Over time, we expect to get to the point where rate limits are low enough that exfiltrating model weights before being detected is very difficult—even if an attacker has otherwise significantly compromised our systems. Implementing egress bandwidth controls has been a forcing function to understand and govern the way in which data is flowing outside of our internal systems, which has yielded benefits for our detection and response capabilities. — Anthropic
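To make the size argument concrete, here is a back-of-the-envelope sketch of how long a transfer takes at different egress limits. The 1 TB weight size and the specific rate limits are illustrative assumptions, not Anthropic's limits or ours.

```python
def exfiltration_time_seconds(size_bytes: float, rate_bits_per_second: float) -> float:
    """Time to move size_bytes over a link capped at rate_bits_per_second."""
    return size_bytes * 8 / rate_bits_per_second


WEIGHTS_BYTES = 1e12  # assumed size: 1 terabyte of weights

# Hypothetical egress limits, chosen only for illustration.
LIMITS_BPS = {
    "400 Gbps (uncapped 400G link)": 400e9,
    "1 Gbps": 1e9,
    "10 Mbps": 10e6,
}

for label, rate in LIMITS_BPS.items():
    seconds = exfiltration_time_seconds(WEIGHTS_BYTES, rate)
    print(f"{label}: {seconds:,.0f} s ({seconds / 86400:.2f} days)")
```

Under these assumptions an uncapped 400G link moves the weights in about 20 seconds, while a 10 Mbps cap stretches the same transfer to more than nine days, which is the window detection gets to work with.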

Bandwidth limiting is one layer of defence in depth. DPUs can provide others (not implemented in our proof of concept):

- Weight transfer policy enforcement. The DPU can understand storage protocols and block transfers that look like bulk weight data leaving the cluster, while allowing legitimate small flows.

- Outbound traffic classification. All traffic passes through the DPU, so it can classify and label every outbound flow.

- Network tapping for AI verification. The DPU sees all traffic metadata, providing a surface for auditing what the cluster communicates with.

- Encryption key revocation on tamper events. Keys live on the DPU, not the host. They can be revoked instantly if tampering or other security events are detected.

The NIC as the Bandwidth Boundary

To add another level of security beyond Anthropic’s system, we’ve enforced bandwidth limits at the NIC level. All the traffic in and out of a node goes through a NIC. This creates a clean bandwidth boundary, and the other security policies that DPUs enable can be enforced at the same point.

Enforcing a smaller boundary than ours is difficult. In the GB200 network topology (see diagram) the GPUs are connected to GPUs in other nodes through NVSwitches without any NICs on the network path. It is hard to enforce bandwidth limits on NVSwitches as there are often 20 of them connected in parallel, so compromising only one or two is enough to bypass the limit. In the H100 network topology all inter-node communication happens through NICs, so our system would sit at the node level there. We can also imagine boundaries at multiple levels between the datacenter and the pod.

Bandwidth limiting at the tray level (in our case, on the NIC) has some good features:

- Fine-grained control. The controller can set exact bandwidth limits for each connection based on knowledge about what throughput each workload requires. This can allow for the bandwidth in and out of the datacenter as a whole to be higher if required.

- Physical access protection. With datacenter-level bandwidth limiting, someone with physical access to the internal network can plug into a switch and exfiltrate data to a device. With tray-level limiting, every server’s only network connection goes through its DPU. Physically bypassing that requires pulling out a tray.

- Protection against rewiring. Each DPU encrypts all inter-node traffic using keys that the host can’t access. If someone physically reconnects a cable to a different port or device, the receiving DPU won’t accept the traffic — it doesn’t have the right tunnel keys.

Note: we have not yet fully analysed resilience to a single compromised DPU within the mesh.

There are also some downsides:

- Higher friction operation. Limiting the ability to move weights around the data centre adds another layer of friction. This system has flexible bandwidth control which could be used to ease this friction, but that flexibility adds further attack surface.

- More effort to implement. You need a DPU for each GPU, and one for the tray as a whole.

The NIC-level boundary is harder to implement, but it opens up new opportunities for workload verification, rule enforcement, plus defence in depth.

What we built

We built a per-node bandwidth limiter by replacing each tray’s NIC with a BlueField 3 DPU. Each DPU enforces per-destination rate limits and encrypts all inter-node traffic. With hardware-accelerated encryption, they reach 193 Gbps of encrypted throughput, against 390 Gbps unencrypted, which is essentially line rate for 400G Ethernet. A central controller manages the bandwidth limit over a separate management network.

Requirements that we set out with:

- Work at line speed. Whilst we wanted to be able to clamp bandwidth, we also wanted to provide a permissive system that could work with the high-bandwidth communication needed for some AI workloads. With authorisation, we wanted the system to be able to ramp up to high speeds.

- Node-to-node encryption. Encrypted tunnels ensure that traffic can only flow between authorised DPUs. Without them, a compromised host could send data — within its bandwidth quota — to a rogue device planted on the network.

- Minimal trust. We managed to create a system where only the DPUs and a controller node needed to be trusted to enforce bandwidth limits. The DPU cannot be reconfigured by a compromised host.

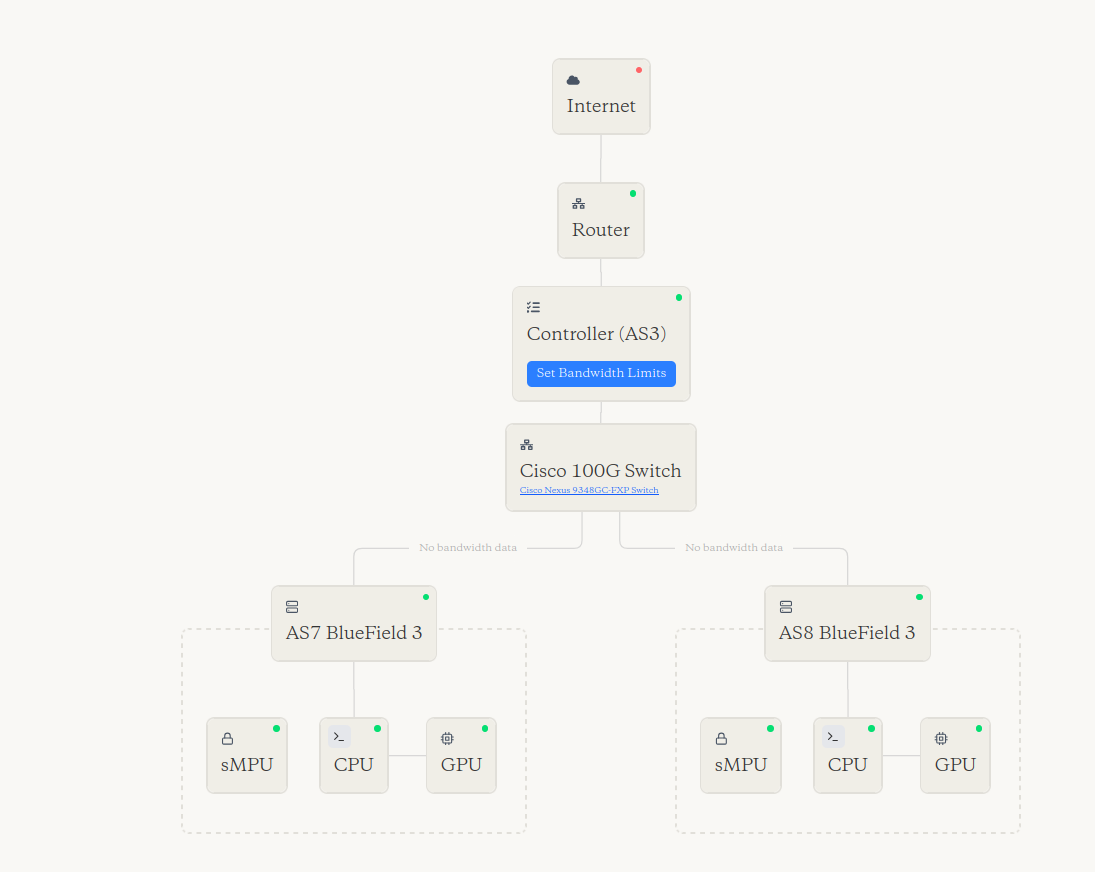

The monitoring UI. This also gives an overview of how we integrated the BlueFields into our small datacenter. AS3, AS7 and AS8 are the names of 3 different servers in our system.

The key components of our solution are:

- The hosts: CPUs+GPUs, responsible for the AI workloads.

- The DPUs (BlueFields): act as NICs, enforce the bandwidth limits and encrypt/decrypt the traffic.

- The controller: sets the bandwidth limits and sends them to the BlueFields.

IPsec — A protocol for encrypting network traffic at the IP layer. We used it for all inter-node encryption.

StrongSwan — An open-source IPsec implementation. We used it to access the DPU’s hardware crypto accelerator.

OVS (Open vSwitch) — Software that turns any machine with an OS into a network switch. We run it on the DPU to route host traffic and enforce per-destination rate limits.

OOB — Out-of-band. In this case it refers to a separate management network for SSH access, independent of the data network.

Choosing the tray-level limiting device:

- SmartNICs can’t enforce bandwidth limits, as they just take instructions from the host CPU.

- DPUs have their own CPU and OS to process bandwidth controls.

| Approach | Host can’t bypass | Per-node | Programmable | Notes |
|---|---|---|---|---|
| SmartNIC | No | Yes | No | The SmartNIC accelerates crypto, but the host OS still configures which traffic gets encrypted, which keys to use, and what limits apply. |
| Switch-level enforcement (VLANs, ACLs, P4 switches) | Yes | Partly | Yes | The host doesn’t control the switch. Enforcement is centralized on the switch, not per-node. A single compromised switch is enough to bypass the limit. |
| Cloud SmartNICs (AWS Nitro, Azure Boost) | Yes | Yes | No | Managed by the cloud provider. You can’t SSH in, deploy scripts, or update configuration. |
| NVIDIA BlueField 3 DPU (ours) | Yes | Yes | Yes | The DPU runs its own OS and controls the crypto policy. The host can’t reconfigure keys, bypass encryption, or remove bandwidth limits. Configured programmatically by the controller. |

A comparison of various approaches to tray-level bandwidth limiting.

We chose NVIDIA BlueField 3 DPUs and dropped them in place of the NICs in a server tray. The next section covers a few notes on building the limiter. Most of the difficulty was making it fast, rather than making it slow!

How we built it

We started by setting up a simple bandwidth-limiting system on the host CPUs rather than the DPUs, using tc. It worked, but was trivially bypassable by anything with root access to the host.
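We don't reproduce our exact tc configuration here. As a rough illustration of what a host-side shaper does, the sketch below simulates a token bucket, the mechanism behind tc qdiscs such as tbf. The class name, rate, and burst size are hypothetical and chosen only for the example.

```python
class TokenBucket:
    """Minimal token-bucket rate limiter (illustrative, not our tc config)."""

    def __init__(self, rate_bytes_per_s: float, burst_bytes: float):
        self.rate = rate_bytes_per_s
        self.burst = burst_bytes
        self.tokens = burst_bytes  # start with a full bucket
        self.last = 0.0            # timestamp of the last refill, in seconds

    def allow(self, packet_bytes: int, now: float) -> bool:
        # Refill tokens for the elapsed time, capped at the burst size.
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False  # over the limit: the packet is dropped


bucket = TokenBucket(rate_bytes_per_s=1_000_000, burst_bytes=3_000)
# Offer one 1500-byte packet every 0.5 ms for one second (3 MB/s offered load).
sent = sum(1500 for i in range(2000) if bucket.allow(1500, now=i * 0.0005))
print(f"offered 3000000 bytes, passed {sent} bytes")
```

The limiter holds the flow to roughly the configured 1 MB/s, but because it runs on the host, anything with root can simply remove it. That is the weakness that pushed us to enforce the limit on the DPU instead.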

For the bandwidth limiter hosted on the DPU, we first tried OVS’s built-in ingress policing. It capped traffic, but only per interface, not per destination. The solution was OVS metering, which gives us per-destination rate limits.

OVS metering — OVS feature for rate-limiting traffic per destination.
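As a sketch of how a controller might turn a per-destination policy into OVS meters, the helper below builds `ovs-ofctl` commands: one drop-band meter and one matching flow per destination. The bridge name, IP addresses, rates, and flow priority are hypothetical, and this is not our controller code. The commands are only built as strings here, not executed, and a hardware-offloaded BlueField setup may need different details.

```python
import ipaddress


def meter_commands(bridge: str, limits_kbps: dict[str, int]) -> list[str]:
    """Build ovs-ofctl commands: one meter and one flow per destination IP."""
    commands = []
    for meter_id, (dst_ip, rate_kbps) in enumerate(sorted(limits_kbps.items()), start=1):
        ipaddress.ip_address(dst_ip)  # raises ValueError on a malformed address
        # Meter: drop traffic above rate_kbps.
        commands.append(
            f"ovs-ofctl -O OpenFlow13 add-meter {bridge} "
            f"meter={meter_id},kbps,band=type=drop,rate={rate_kbps}"
        )
        # Flow: send IP traffic for this destination through the meter,
        # then forward it normally.
        commands.append(
            f"ovs-ofctl -O OpenFlow13 add-flow {bridge} "
            f"priority=100,ip,nw_dst={dst_ip},actions=meter:{meter_id},normal"
        )
    return commands


# Hypothetical policy: 1 Gbps to one peer, 10 Mbps to another.
policy = {"10.0.0.2": 1_000_000, "10.0.0.3": 10_000}
for command in meter_commands("br0", policy):
    print(command)
```

Keeping the policy as a plain mapping from destination to rate means the controller can raise or lower a single peer's limit without touching the others.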

Setting up the BlueField DPUs

To give you a taste of the kinks we had to iron out to make the BF3s work as NICs, here are some examples:

- The BF3s shipped with a typo in the PCIe configuration parameters: `UPSTRAEM` instead of `UPSTREAM`. This meant the PCIe settings couldn’t be configured until we flashed the latest firmware bundled with DOCA 2.10.0.

- The exact SKU ended up being important. One of our BF3 cards turned out to be a “self-hosted” SKU, which has a fundamentally different operating model from DPU-mode cards. We only discovered this after days of debugging why the standard setup wasn’t working. NVIDIA directed us to a more suitable SKU after we explained what we’d been doing.

- `systemd-networkd` repeatedly hung on boot. We worked around this by disabling the `network-wait-online` service.

systemd-networkd — A Linux service that manages network configuration on boot.

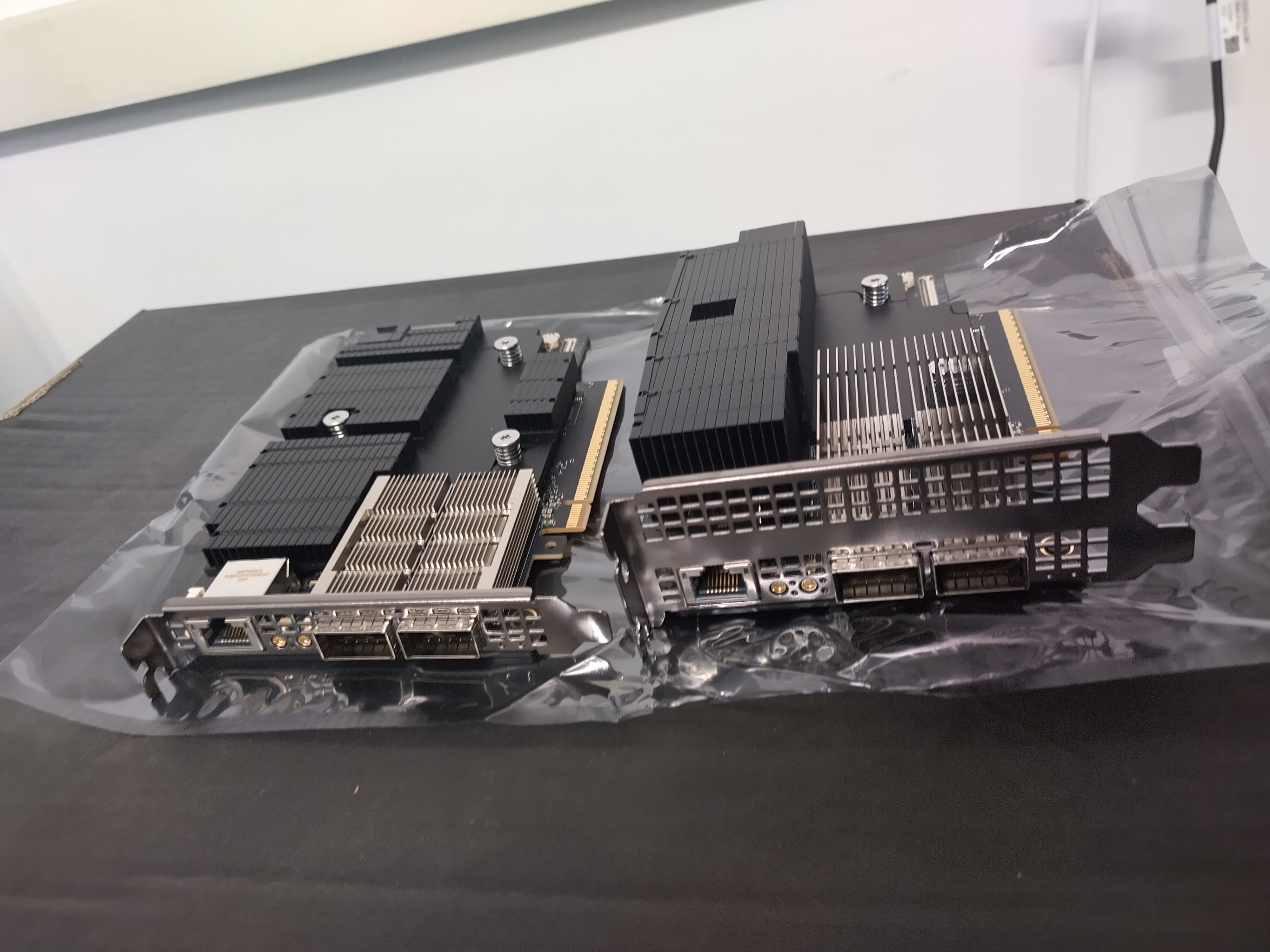

The difference in form factor between SKUs is just the heatsink size.

The quest for 400 Gbps

We wanted all inter-node traffic encrypted, with keys we could revoke on a security event. At 400 Gbps, encryption is hard. The DPU’s ARM cores are not fast enough for line-rate encryption in software. To hit those speeds, we needed the DPU’s hardware crypto accelerator, accessed via StrongSwan with hardware-offloaded IPsec.

We achieved 390 Gbps raw unencrypted throughput between the two DPUs — essentially line rate for 400G Ethernet with protocol overhead. For encrypted throughput, we reached 193 Gbps.
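A quick calculation puts the two measurements side by side. The throughput figures are the ones reported above; treating 400 Gbps as the nominal line rate is the only assumption.

```python
# Figures reported in this post.
LINE_RATE_GBPS = 400.0   # nominal 400G Ethernet
RAW_GBPS = 390.0         # measured unencrypted throughput
ENCRYPTED_GBPS = 193.0   # measured IPsec throughput with hardware offload


def share(measured_gbps: float, reference_gbps: float) -> float:
    """Return measured throughput as a fraction of a reference rate."""
    return measured_gbps / reference_gbps


print(f"raw vs line rate:       {share(RAW_GBPS, LINE_RATE_GBPS):.1%}")
print(f"encrypted vs raw:       {share(ENCRYPTED_GBPS, RAW_GBPS):.1%}")
print(f"encrypted vs line rate: {share(ENCRYPTED_GBPS, LINE_RATE_GBPS):.1%}")
```

Encrypted throughput comes out a little under half of the raw figure, which is the gap the software offload path would need to close.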

Getting here required NVIDIA to produce a patched BFB (firmware image) after their engineers identified a bug in the underlying Mellanox OFED (network driver stack) kernel driver. They set up clones of our system, ran weekly debugging sessions, and cut a custom driver patch.

The crypto hardware itself can handle more — the bottleneck is in the software layer that offloads packets to it. We are therefore optimistic that we can achieve full line speed with some further work with NVIDIA engineers.

Advice

We made a few decisions that made this work a lot easier:

- We got in touch with the BlueField team at NVIDIA. We were using the BlueField 3s in an atypical way, so we expected to have to iron out a bunch of kinks. They were excited to help out a team expanding the use case of their DPUs and were helpful when we eventually did run into (quite a few) kinks.

- We set up two separate networks. One for data, one for management. When you misconfigure the data network (and you will, repeatedly, as you are building a bandwidth limiter), you need to be able to SSH into every machine over a separate path.

- We installed Tailscale on the DPUs. NVIDIA were surprised we did this, but it worked without issues.

- We used DPDK testpmd for testing. It let us benchmark raw throughput; IPsec testing was too slow without it.

What’s Next

We built this to resolve key uncertainties about DPUs as a security component. Can a DPU encrypt at near line rate? Yes — 193 Gbps with hardware-offloaded IPsec. Can it handle multi-peer encrypted tunnels while enforcing per-destination bandwidth limits? Yes.

If you want to build on this work, we’d love to hear from you. The bandwidth limiter is one feature — the DPU can support others like weight transfer policy enforcement, traffic classification, and network tapping. Let us know if you are interested in seeing this implemented — we can expand this prototype, or help you do it!

If you have other hardware uncertainties around AI security and verification — whether something is physically possible, how fast it can go, what the DPU can and can’t do — reach out.